The 95% Problem

Up to $40 billion in enterprise GenAI spending. 95% of companies have nothing to show for it. The problem isn’t the AI.

There’s a number circulating in enterprise boardrooms right now that should make every AI vendor nervous.

MIT’s Project NANDA released its “State of AI in Business” report last year. The headline finding: 95% of companies that piloted generative AI saw no measurable impact on their P&L. Not modest returns. Not returns below expectations. Zero.

Then PwC surveyed 4,454 CEOs across 95 countries. Same story, slightly different math: 56% reported zero financial return from AI investments. Only 12% said they’d achieved both cost savings and revenue growth. One in five said their costs actually increased.

McKinsey piled on: more than 80% of organizations aren’t seeing tangible enterprise-level EBIT impact from GenAI.

These aren’t fringe surveys from skeptics. These are MIT, PwC, and McKinsey telling us that somewhere between half and nearly all companies investing in AI are getting nothing back. The collective investment is estimated at $30-40 billion in enterprise GenAI alone.

So what’s going on?

The answer isn’t better data. It isn’t more talent. It isn’t “finding the right use case.” All three studies point to the same root cause, and it has nothing to do with the technology.

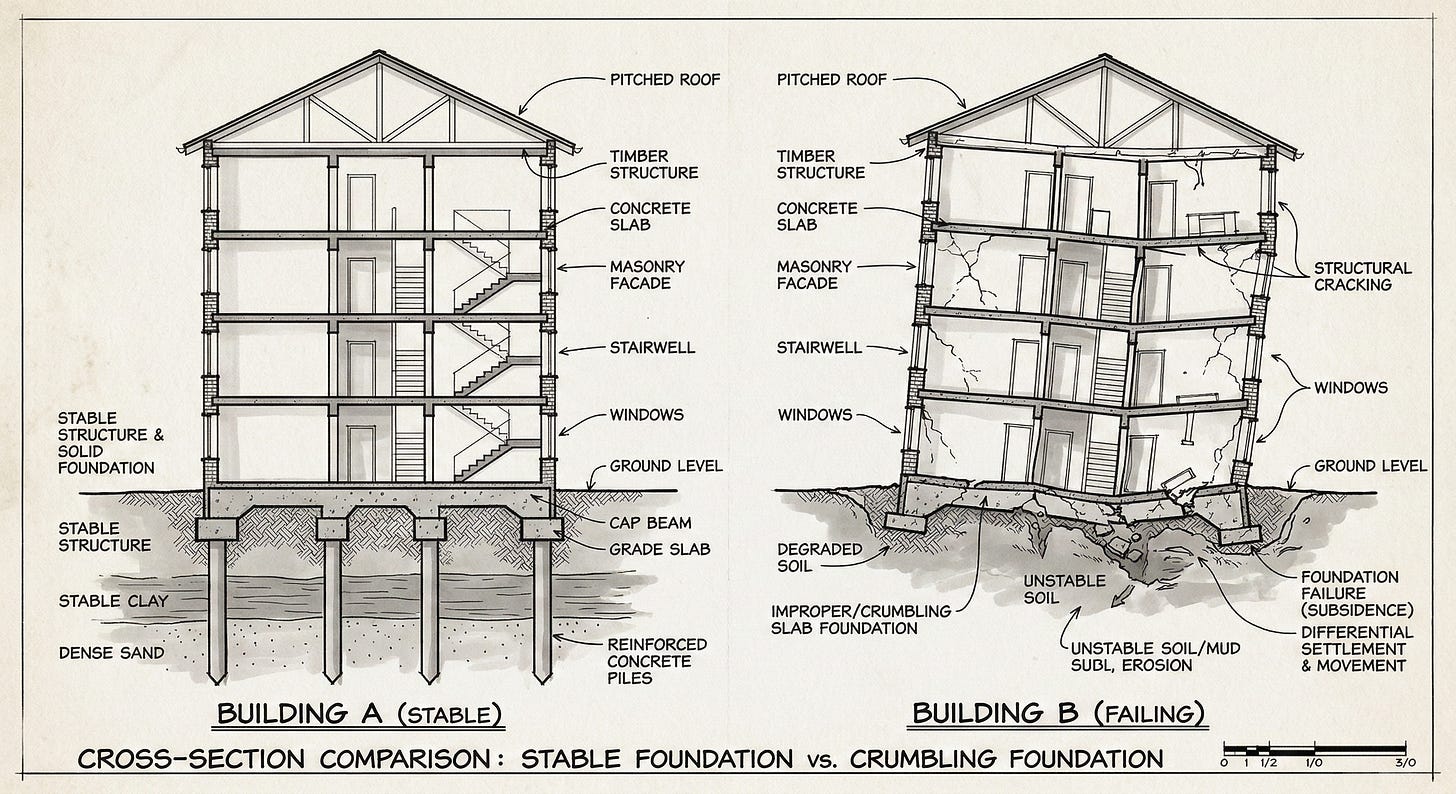

The tools work. The architecture underneath them doesn’t exist.

There is a fundamental difference between buying AI tools and building an organizational intelligence architecture. The tools are the easy part. The hard part, the part that the 95% are skipping, is the redesign of how knowledge flows through an organization. Who owns what context. How decisions get made. Where human judgment intersects with machine capability. How intelligence compounds over time instead of resetting every Monday morning.

That’s what this piece is about. Not the tools. The architecture that makes them worth buying.

What MIT Actually Found

The MIT study was explicit about the barrier. It’s not data quality, budget constraints, regulation, or talent. The dominant barrier is organizational design.

Read that again. Not technology. Not data. Organizational design.

Companies that crossed what MIT calls “the GenAI Divide,” the small minority actually generating returns, share a specific pattern: they decentralized implementation authority to domain leaders and frontline managers while retaining accountability at the top. They focused on narrow, workflow-specific use cases with clearly defined operational outcomes. And they embedded AI directly into existing tools and processes rather than building it alongside them.

The failures? They centralized AI in innovation labs, pointed budgets at broad “transformation” initiatives, and treated AI as something to bolt onto existing processes.

MIT’s central explanation is worth sitting with: most GenAI systems don’t retain feedback, don’t adapt to context, and don’t improve over time. They’re static tools deployed into static processes. No organizational memory. No feedback loops. No compounding intelligence.

This is the architecture gap. Companies bought tools and skipped the part where you redesign how information, decisions, and learning actually move through the organization. They automated individual tasks without rethinking the workflow those tasks live inside. And then they wondered why the P&L didn’t move.

The Banking Proof Point

McKinsey published a piece recently about agentic AI in banking that illustrates exactly what the architecture gap looks like in practice. They interviewed 400 bankers, relationship managers, and sales leaders. 85% said they’re already using AI in some form. Optimism was high.

But the report’s real insight was buried in the implementation section. The banks seeing actual returns weren’t the ones that bought the fanciest tools. They were the ones that reimagined entire workflows with human and AI coworkers operating together. McKinsey’s language was specific: the shift was “from automating tasks within an existing process to reinventing the entire process.”

Three new roles emerged in the banks that got it right. M-shaped supervisors: broad generalists who orchestrate agents and the hybrid workforce across domains. T-shaped experts: deep specialists who handle exceptions and safeguard quality. AI-augmented frontline workers: people who spend less time on systems and more time with humans.

The banks that failed treated AI like better software. The banks that succeeded treated it as a reason to redesign how humans and machines work together. Same tools. Different architecture. Completely different outcomes.

That’s the 95% problem in miniature. The technology isn’t the variable. The organizational design is.

What the 5% Know

So who are the 5% that crossed the divide?

MIT’s data points to three consistent characteristics, and every one of them is an architecture decision, not a technology decision.

They started narrow. Successful implementations focused on specific workflows, not broad transformation. They picked one process, redesigned it from scratch with AI as a coworker, and proved the value before expanding. The MIT study found that buying AI tools from specialized vendors and building partnerships succeeds about 67% of the time, while internal builds succeed only one-third as often (which implies roughly 22%). The lesson: don’t try to build everything yourself, and don’t try to transform everything at once.

They embedded, not bolted. AI systems were designed to operate within day-to-day work, not alongside it. They were integrated into existing tools and data flows, reducing context switching. The successful deployments weren’t “AI projects.” They were process redesigns that happened to include AI.

They kept humans in the loop. Across every successful deployment, AI was designed to augment human judgment rather than replace it. Humans stayed responsible for architecture, correctness, and evolution. AI handled acceleration, suggestion, and pattern recognition within defined constraints.

This is the opposite of how most companies approach AI. Most start broad (“let’s transform the enterprise!”), bolt on (“let’s add AI to our existing dashboard!”), and automate (“let’s replace the analysts!”). They get pilot fatigue, stakeholder confusion, and zero P&L impact.

Notice what’s missing from the success criteria: nobody mentioned which model they used. Nobody said GPT-4 outperformed Claude or vice versa. The model was irrelevant. The architecture was everything.

The Foundations Problem

PwC’s chairman put it bluntly: companies seeing zero return have a “foundations” problem. Their AI investments are “isolated, tactical projects” disconnected from business strategy. Companies with strong AI foundations (Responsible AI frameworks, enterprise-wide integration environments, clear governance) are three times more likely to report meaningful returns.

This is what I keep coming back to. The foundation isn’t the model. The foundation is the map.

How does knowledge flow through your organization? Where does institutional memory live? Who is responsible for what context? How do decisions route from insight to action? What happens when someone leaves, or when you switch providers, or when the org changes shape?

If you can’t answer those questions, you don’t have an AI strategy. You have a collection of tool subscriptions. And you’re almost certainly in the 95%.

Why This Matters Now

The timing of all this research converging is not accidental. We’re at an inflection point.

Gartner predicted that by 2026, 50% of organizations would require “AI-free” skills assessments because critical thinking is atrophying. Stanford called 2026 the year AI shifts from evangelism to evaluation. Deloitte’s latest enterprise AI survey shows spending going up but ROI remaining elusive.

The era of “just buy AI tools and figure it out” is ending. What’s replacing it is a question that most organizations are not prepared to answer: How do we redesign our organization so that AI actually works?

That’s an architecture question. It’s a knowledge management question. It’s an organizational design question. It is, fundamentally, a human question.

The tools are commoditized. Claude is functionally free. GPT is functionally free. The models will keep getting better and cheaper and faster.

But knowing how to use them? Knowing how to restructure workflows, build knowledge architectures, design human-AI operating models, and create the organizational memory that makes AI compound instead of depreciate?

That’s what the 95% are missing. And that’s where the real value lives.

The Pragmatic Takeaway

If you’re a leader reading this and wondering whether your organization is in the 95% or the 5%, here’s a quick test.

Ask yourself: If we switched AI providers tomorrow, would our organization’s intelligence survive the transition?

If the answer is no, if your “AI strategy” is a collection of tool subscriptions and pilot projects that live in someone’s head, you have a tools problem masquerading as an AI strategy.

If the answer is yes, if you’ve built the knowledge architecture, the workflow redesign, the human-AI operating model that transcends any specific vendor, then you’re building something durable.
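For the technically inclined, here is one way to picture that test. This is a minimal sketch, not a reference design: `OrgMemory`, `Assistant`, `VendorA`, and `VendorB` are hypothetical names invented for illustration, and the "vendors" are stand-ins that call no real API. The point is only that the accumulated context lives in something the organization owns, so the provider can be swapped without losing it.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Provider(Protocol):
    """Hypothetical interface: any model vendor that can answer a prompt."""
    def complete(self, prompt: str) -> str: ...


@dataclass
class OrgMemory:
    """Organization-owned context, stored outside any vendor's product."""
    notes: dict[str, list[str]] = field(default_factory=dict)

    def record(self, workflow: str, lesson: str) -> None:
        # Feedback is retained per workflow instead of being lost after each session.
        self.notes.setdefault(workflow, []).append(lesson)

    def context_for(self, workflow: str) -> str:
        return "\n".join(self.notes.get(workflow, []))


@dataclass
class Assistant:
    provider: Provider
    memory: OrgMemory

    def ask(self, workflow: str, question: str) -> str:
        # Every request carries the organization's own context to whichever vendor is plugged in.
        prompt = f"{self.memory.context_for(workflow)}\n{question}"
        return self.provider.complete(prompt)


class VendorA:
    """Stand-in vendor for illustration; reports how many prompt lines it received."""
    def complete(self, prompt: str) -> str:
        return f"[vendor-a] saw {len(prompt.splitlines())} lines"


class VendorB:
    """A second stand-in vendor with the same interface."""
    def complete(self, prompt: str) -> str:
        return f"[vendor-b] saw {len(prompt.splitlines())} lines"


memory = OrgMemory()
memory.record("loan-review", "Flag applications missing income verification.")
memory.record("loan-review", "Escalate exceptions to a senior underwriter.")

assistant = Assistant(VendorA(), memory)
a = assistant.ask("loan-review", "Summarize open items.")

assistant.provider = VendorB()  # switch providers; the memory is untouched
b = assistant.ask("loan-review", "Summarize open items.")

print(a)
print(b)
```

Both vendors receive the same three lines: two recorded lessons plus the question. In this toy version, that is what "surviving the transition" looks like. A real system would need persistence, access control, and governance around that memory, none of which is shown here.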

The AI is free. The architecture is what costs money. And right now, 95% of companies are spending on the wrong one.